Abstract

Feed-forward 3D generative models such as the Large Reconstruction Model (LRM) have demonstrated exceptional generation speed. However, these transformer-based methods do not exploit the geometric priors of the triplane component in their architecture, which often leads to sub-optimal quality, given the limited amount of 3D data, and to slow training. In this work, we present the Convolutional Reconstruction Model (CRM), a high-fidelity feed-forward single image-to-3D generative model. Recognizing the limitations posed by sparse 3D data, we highlight the necessity of integrating geometric priors into network design. CRM builds on the key observation that a visualized triplane exhibits spatial correspondence with six orthographic images. It first generates six orthographic view images from a single input image, then feeds these images into a convolutional U-Net, leveraging its strong pixel-level alignment capabilities and significant bandwidth to create a high-resolution triplane. CRM further employs Flexicubes as its geometric representation, facilitating direct end-to-end optimization on textured meshes. Overall, our model delivers a high-fidelity textured mesh from an image in just 10 seconds, without any test-time optimization.

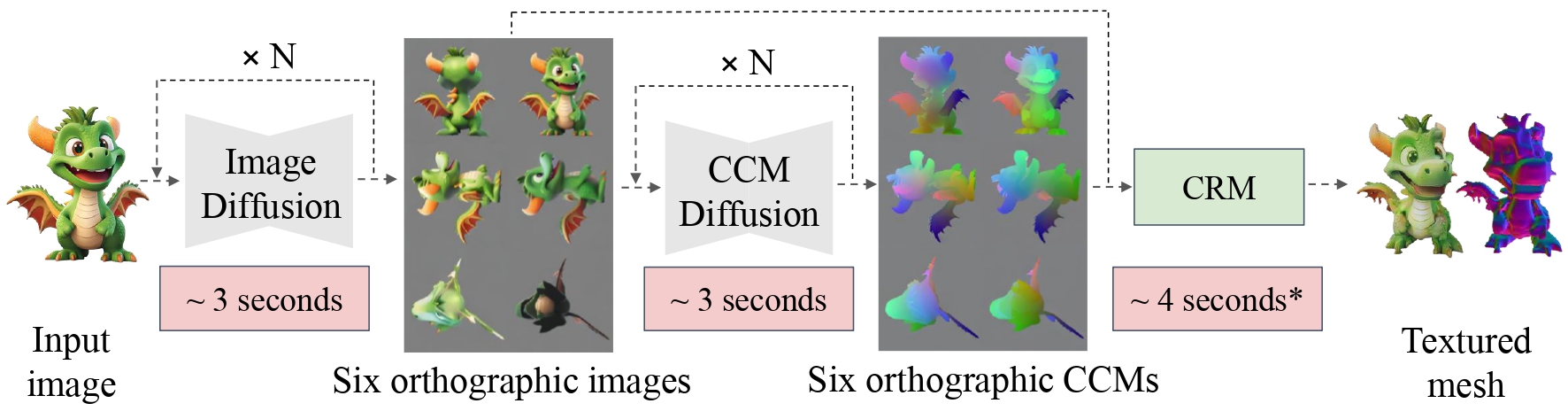

Figure 1 Overall pipeline of our method. The input image is fed into a multi-view image diffusion model to generate six orthographic images. Another diffusion model then generates the canonical coordinate maps (CCMs) conditioned on the six images. The six images, along with the CCMs, are sent into CRM to reconstruct the final textured mesh. The whole inference process takes around 10 seconds on an A800 GPU. *The 4 seconds includes the U-Net forward pass (less than 0.1s), querying surface points for the UV texture, and file I/O.
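
To make the triplane representation concrete, the following is a minimal NumPy sketch of a generic triplane lookup: a 3D point is projected onto three axis-aligned feature planes, each plane is sampled bilinearly, and the three feature vectors are combined. This is not CRM's implementation. The function names `sample_plane` and `query_triplane`, the plane keys, the `[-1, 1]` coordinate convention, and the choice to concatenate (rather than sum) the per-plane features are all assumptions made for illustration.

```python
import numpy as np


def sample_plane(plane, u, v):
    """Bilinearly sample a (C, H, W) feature plane.

    u indexes the width axis and v the height axis, both in [-1, 1].
    Returns an (N, C) array of features. Requires H >= 2 and W >= 2.
    """
    _, H, W = plane.shape
    # Map normalized coordinates to continuous pixel positions.
    x = (u + 1.0) * 0.5 * (W - 1)
    y = (v + 1.0) * 0.5 * (H - 1)
    # Top-left corner of the interpolation cell, clamped to stay in bounds.
    x0 = np.clip(np.floor(x).astype(int), 0, W - 2)
    y0 = np.clip(np.floor(y).astype(int), 0, H - 2)
    wx = x - x0
    wy = y - y0
    # Interpolate along the width axis, then along the height axis.
    top = plane[:, y0, x0] * (1 - wx) + plane[:, y0, x0 + 1] * wx
    bottom = plane[:, y0 + 1, x0] * (1 - wx) + plane[:, y0 + 1, x0 + 1] * wx
    return (top * (1 - wy) + bottom * wy).T


def query_triplane(planes, points):
    """Look up features for (N, 3) points in [-1, 1]^3.

    planes is a dict with hypothetical keys 'xy', 'xz', 'yz', each (C, H, W).
    Per-plane features are concatenated here (an assumption; summation is
    another common choice), giving an (N, 3C) array.
    """
    x, y, z = points[:, 0], points[:, 1], points[:, 2]
    f_xy = sample_plane(planes["xy"], x, y)
    f_xz = sample_plane(planes["xz"], x, z)
    f_yz = sample_plane(planes["yz"], y, z)
    return np.concatenate([f_xy, f_xz, f_yz], axis=1)
```

In a full pipeline, the returned per-point features would typically be decoded by small networks into geometry and color values; that decoding step is outside the scope of this sketch.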